I led the UX work to understand consumer requirements for the robot initiative within Perceptual Computing at Intel. I visited customers and partnered with product managers to understand the business requirements and technical boundaries.

Brainstorming sessions were held with stakeholders to generate viable usage scenarios. I collaborated with a market research agency to test these scenarios with 900 participants. Here are a few examples of the scenarios:

Based on testing results and customer features, we decided on two top scenarios to build out as storyboards and interaction flows. These were then used to help determine features and priorities (MVPs) for our first robot prototype.

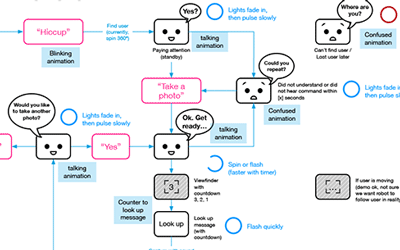

I partnered with a developer to create "Hiccup".

2 main motivations to build our own prototype:

(1)

test out features of our software and middleware and their combined usages

(2) to

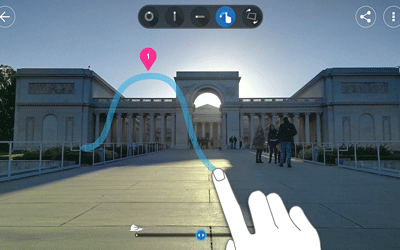

observe people's behaviors and interactions by answering some questions:

Our first version of the prototype was 3 feet tall because our customers were creating robots at that height. We quickly learned that people do not like having their photos taken from a low, upward angle. We hacked together a V2 by attaching a stick to the TurtleBot structure and taping the photo-capture camera to the top of the stick (as you can see in the video).

The captured photos, at that new height, were clearly more appealing. This led to the idea of incorporating an actuator that can raise and lower the height of the photo-capture camera while still maintaining the overall form factor of a shorter robot.

But another problem arose: all the photos showed people looking down towards the preview screen rather than at the camera.

We discussed various physical changes, but decided to test with UI, sound, and LED lights first, to see whether one indicator or a combination of them could solve this problem by drawing attention and reminding users to look up at the camera right before capture.

The next step for this prototype was to build a skin around the TurtleBot, so we brainstormed themes. While a visual designer was sketching ideas, I thought more about how best to arrange the robot's internal structure to accommodate the various pieces of equipment.

YaYa Shang © 2016